Since the beginning of 2020, I have run more than 100 user testing sessions. Due to the pandemic, a vast number of those have been done remotely over Zoom. In this piece, I reflect on some of my experiences and share how I’ve evolved my approach.

People want to talk to you about their experiences

It is often thought that you have to pay people to get them to give you feedback on your product. While I do believe in compensating people for their time and have paid people for feedback in the past, I no longer lead with this.

When collecting different user perspectives about a product, we often speak about the importance of focusing on a tightly defined persona. Sometimes we even go a step further and say that we want to focus on the subset that represents our first customers, the innovators. With this in mind, I often wonder: if somebody is incentivised by a £50 Amazon voucher, do they fit into the same user persona as the person who has been longing for the product that you are building? Does including people like this in our discovery sessions corrupt our data?

My current perspective is that one of the best ways to compensate potential/current users for their time is by being fully present during your sessions. I recall a user interview I did last year with a woman in Singapore. Our first scheduled session didn’t go to plan because she had internet connectivity issues, but she persevered to sort that out ahead of our rescheduled session. Halfway through it, she shared with me a couple of things that have impacted the way I now treat user testing sessions:

- She was so happy to be able to share her views on the problem space with someone who was able to do something about the issues she was having;

- She would talk to me all day if she could as she hadn’t had much contact with people during the pandemic.

I have come to realise that user research and testing sessions are cathartic. In many ways, we are therapists for the users of our products and we owe them the respect of listening to them, respecting their views, taking considered action and, most importantly, communicating back to them what we plan to do with what they shared with us.

Parallels between user testing and meditation

Speaking with a lot of my peers who operate as Product Managers, I sometimes hear them say things like “I love talking to users; however, once the product development process gets started, it often takes a back seat to everything else”. This is the raw truth that many in my position don’t share.

You will be hard-pressed to find a Product Manager who doesn’t value user interviews and testing. However, it is one of those things that you have to be intentional about before it becomes a habit. Product Managers are often drawn towards working on observable deliverables, and working in that manner can take us away from what really matters.

I link this to meditation. I remember when I was first introduced to the practice, I would make the remark that I don’t have enough time to meditate. What I would soon find out is that meditation is one of those things that makes more room for everything else. The same is true about user research and testing. The time you take to talk to your users will provide you with direction for your product and save you hours of internal debate about what you should do next.

Optimisations

Experience gives you the clarity that you need to refine your process. The past 18 months have given me the opportunity to improve how I prepare for sessions, extract insights and report back my findings. I will share three of my favourite optimisations that I have made.

- Test script

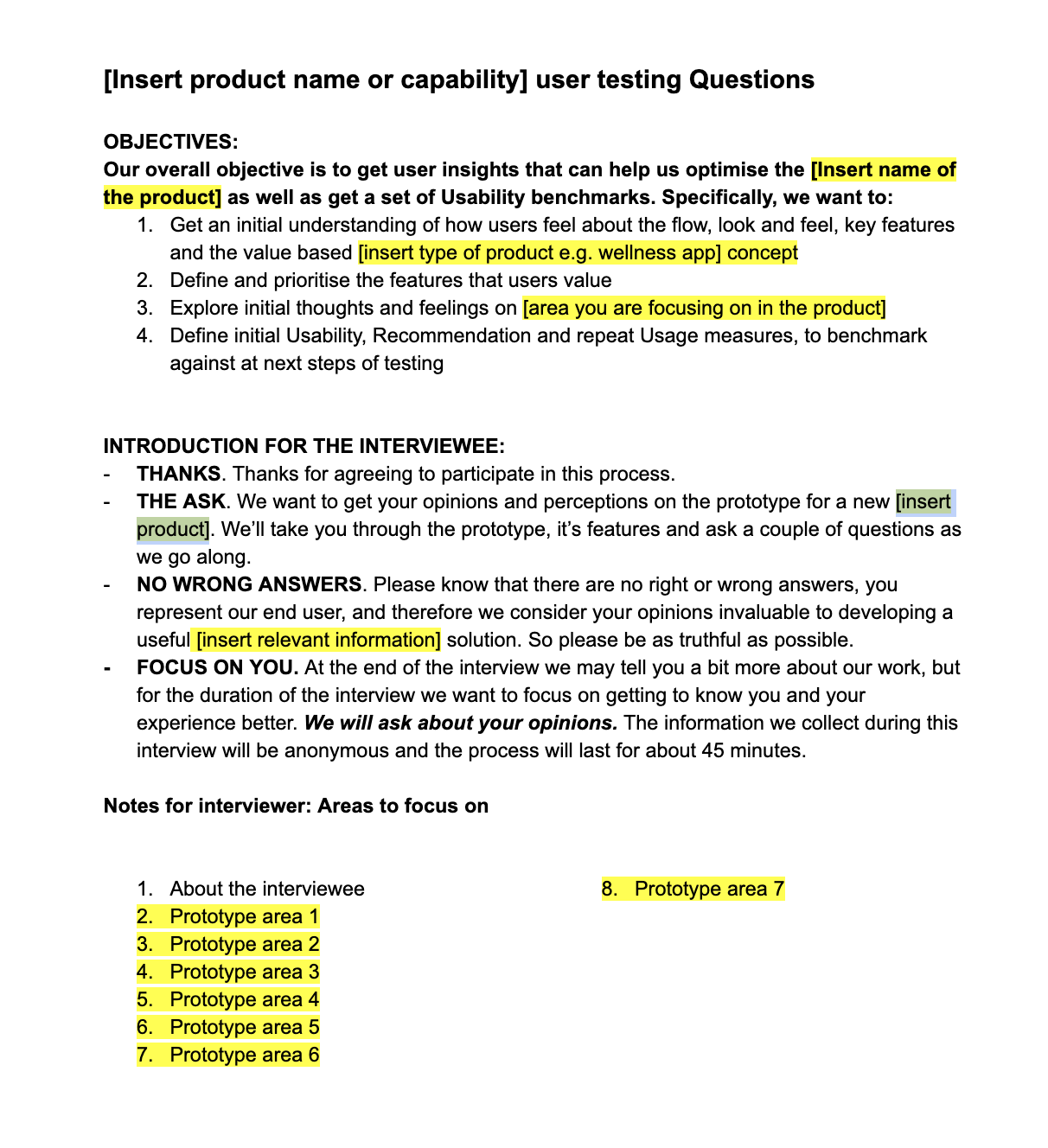

My test script has always been the foundation of my user research and testing, as is the case for many Product Managers. However, as I have grown in my role, I have started to focus more and more on consistency and repeatability.

Consistency has become more important to me as I am more conscious of how different question sequences can lead to conversations about different parts of the problem/product. In turn, this can make it difficult to spot patterns. While I am an advocate for allowing the conversation to flow in these sessions, I am rooted in the belief that there should be some underlying structure. Repeatability matters because, as I grow in seniority, the reality is I won’t always be running these sessions, so I have to provide my colleagues with the framework to adopt a similar approach in my stead. My new test script provides a structure for framing the session and then running it around scenarios.

First page of my current test script template

- Grain

I am always searching for new tools to augment my work, but I rarely find anything that I actually want to add to my toolbox. Grain.io is one of the exceptions. Grain integrates with Zoom and listens to your user hugging sessions (always ask for permission before you record any session you have). It will capture the video recording from Zoom, transcribe the conversation, label the different speakers and allow you to create highlights and stories.

Why does this matter?

If you have ever tried to write up notes after a user research or testing session, you will know that it takes a lot of time and effort. Grain automates that process and allows you to go on to do your thematic analysis and share your insights in minutes. The product is fairly new and the team is working hard on improvements, but it is already a product that I see as a huge value add and something that I will use as part of my user hugging stack for the foreseeable future.

- Output deck

The way we share the insights we gather is what will help stakeholders to understand how they apply to the product. To get their buy-in, it is important to find a way to create a narrative that communicates the importance of the recommendations you are making off the back of your learnings.

Remember, when presenting your findings, the idea isn’t to say “here's what the customer said”. The idea is to tell stakeholders “I found these things out from the customer and this is what we should do”. It is our job as product people to speak with our users and shape the product by drawing on the empathy we have for them.

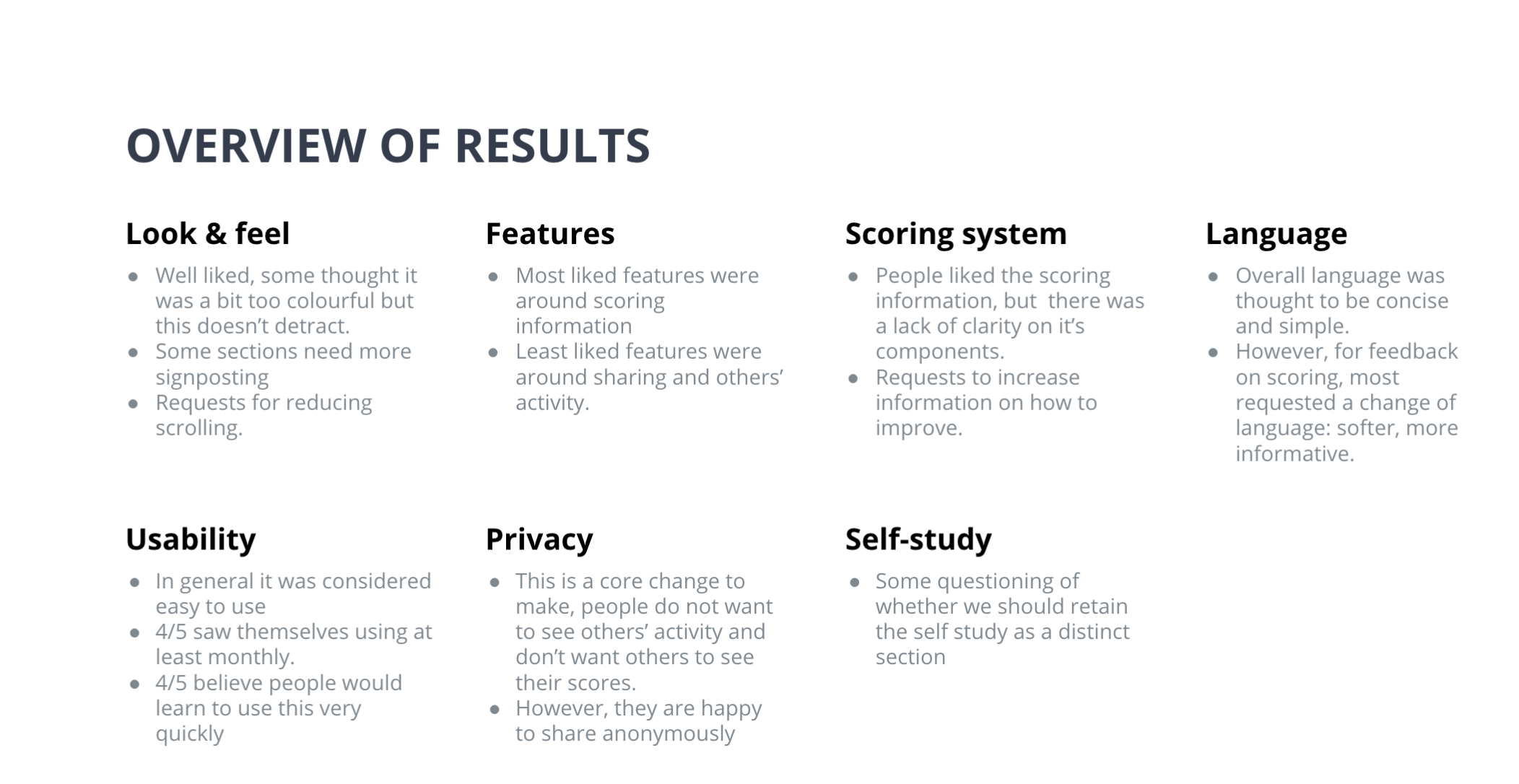

I have refined my deck to frame the goals of the user discovery, the method that I used, the scenarios I tested (along with the screens tested), the results of the user testing session and follow-up survey, and, finally, my recommendations.

The output deck is something I am currently refining as I think it is a powerful artefact to share not only with decision-makers but also with internal design and development teams. I suggest you draft your own and keep working on it. It is a constant work in progress, but a very rewarding one.